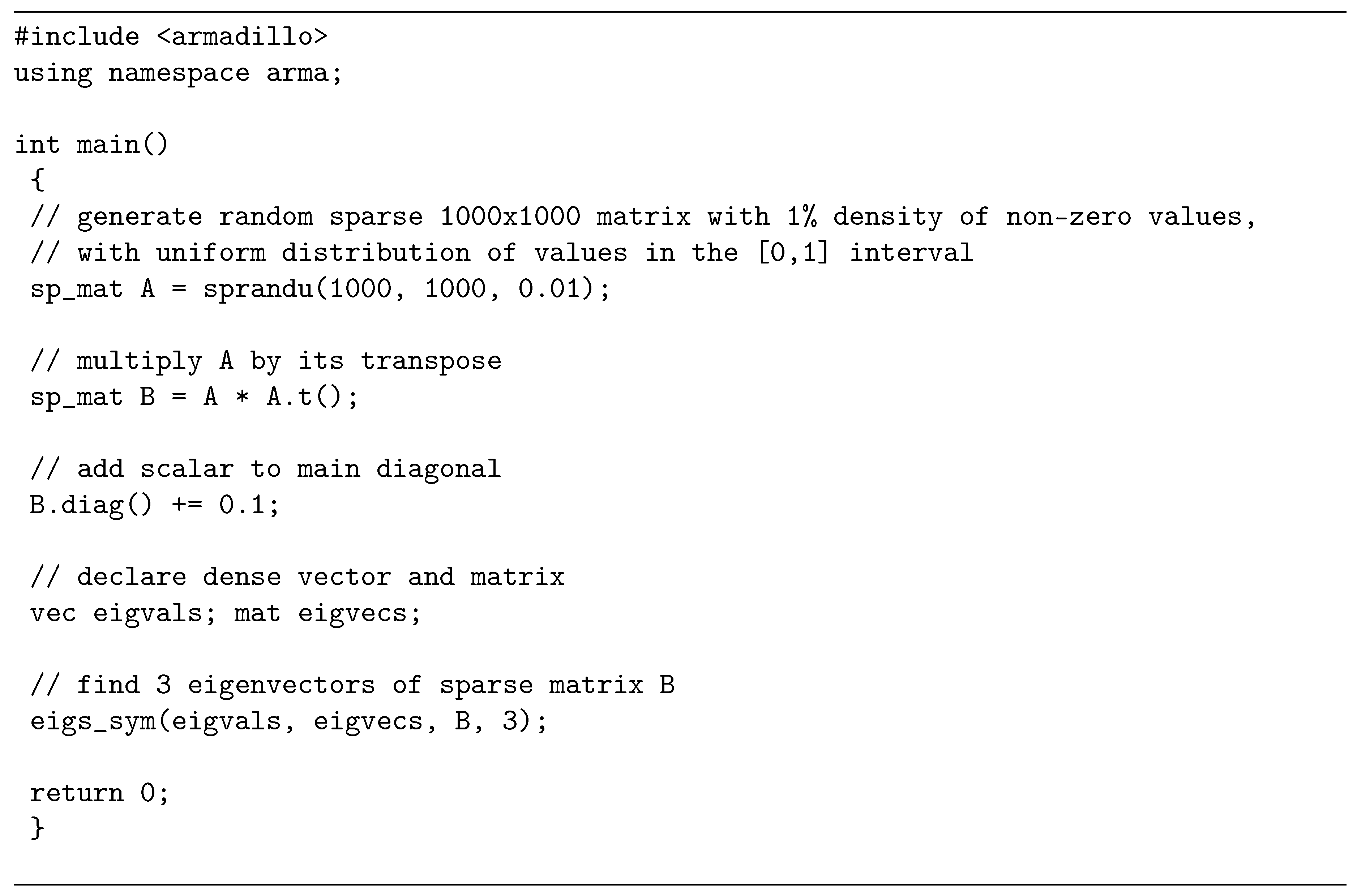

So here is an example for a 2×2 kernel and a 3×3 input. I am pretty sure this is hard to understand just from reading. The construction of the Pt matrix is expensive and the improvement obtained is not very big, but if the multiplication has to be done many times, it may be worth it. You multiply this sparse matrix by a vector and convert the resulting vector (which has size (n-m+1)² × 1) into an (n-m+1) × (n-m+1) square matrix.

In this case I would like to submit the following proposal to you: `function my_mul(Qt,y)`. I suppose this only has to be done once, while the multiplication by x has to be done a very large number of times. In this way you do not count the time necessary for the construction of the matrix M and of the vector b.

This now has the same runtime as the full sparse product, but still allocates a little too much. The code fragments in question:

- `SparseArrays.fkeep!(X.rez, (i,x) -> x>X.tol)`
- `function proj!(x,X::SparsePositivityConstraint)`
- `… = x + X.P*x + X.q` (this line takes 70% of the runtime, due to the sparse matrix-vector product)
- `function proj!(x,X::SparseLinearConstraint)`

Sparse matrix-vector multiplication (SpMV) is a fundamental performance bottleneck in iterative methods for solving large-scale linear systems, eigenvalue problems, and least squares problems. It is one of the most widely used high-performance kernels in various applications, including data mining and machine learning, especially Graph Neural Networks (GNN) [1, 2]. In SpMV, the operation y ← Ax + y is performed, where A is a sparse matrix and x, y are dense vectors. To reduce computation and storage requirements, SpMV …

Sparse matrix multiplication (SpMM) is a sparse matrix-dense matrix multiplication of the form C = AB, where A is sparse and B, C are dense. A number of techniques have been developed, such as increasing utilization of wide vector units, reducing load imbalance, and selecting the best formats.
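To make the 2×2 kernel and 3×3 input example concrete, here is a minimal Julia sketch. The helper name `conv_matrix` is hypothetical (it is not the Pt or M construction from the original posts), and the sketch assumes a square kernel, a "valid" sliding window without kernel flipping, and Julia's column-major `vec` ordering.

```julia
using SparseArrays

# Build a sparse matrix M such that M * vec(X) == vec(Y), where Y is the
# "valid" sliding-window product of an n×n input X with an m×m kernel K.
# Hypothetical helper for illustration; assumes K is square.
function conv_matrix(K::AbstractMatrix{T}, n::Integer) where {T}
    m = size(K, 1)
    p = n - m + 1                          # the output is p×p
    rows = Int[]; cols = Int[]; vals = T[]
    for j in 1:p, i in 1:p                 # output position (i, j)
        r = i + (j - 1) * p                # column-major index into vec(Y)
        for b in 1:m, a in 1:m             # kernel position (a, b)
            c = (i + a - 1) + (j + b - 2) * n   # column-major index into vec(X)
            push!(rows, r); push!(cols, c); push!(vals, K[a, b])
        end
    end
    return sparse(rows, cols, vals, p^2, n^2)
end

K = [1.0 2.0; 3.0 4.0]                     # 2×2 kernel
X = reshape(collect(1.0:9.0), 3, 3)        # 3×3 input
M = conv_matrix(K, 3)                      # 4×9 sparse matrix, built once

y = M * vec(X)                             # vector of length (n-m+1)^2
Y = reshape(y, 2, 2)                       # back to an (n-m+1) square matrix
```

The matrix is built once and can then be reused for every new input of the same size, which is the situation where the construction cost may pay off.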
Here is a (quickly extracted, so not that clean) MWE: `using SparseArrays`, `function SparseLinearConstraint(A::Matrix) where T` …

With the extensive use of GPUs in modern supercomputers, accelerating sparse matrix-vector multiplication (SpMV) on GPUs has received much attention in the last couple of decades.
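For the expression `x + X.P*x + X.q` from the MWE, one way to cut allocations is to write it in the SpMV form y ← Ax + y with the five-argument `mul!` from `LinearAlgebra` (available since Julia 1.3). The sketch below is only an illustration: the type `AffineStep` and the function `affine_step!` are hypothetical stand-ins, and only the field names `P` and `q` are taken from the original fragment. It removes the temporaries, but the sparse product itself still dominates the runtime.

```julia
using SparseArrays, LinearAlgebra

# Hypothetical stand-in for the constraint type in the MWE; only the fields
# P and q that appear in the quoted line are modelled here.
struct AffineStep{T}
    P::SparseMatrixCSC{T,Int}
    q::Vector{T}
end

# Computes out = x + P*x + q without allocating temporaries.
# `out` must not alias `x`, because x is still read after out is written.
function affine_step!(out::Vector{T}, x::Vector{T}, X::AffineStep{T}) where {T}
    out .= x .+ X.q                        # fused broadcast, written in place
    mul!(out, X.P, x, one(T), one(T))      # out = P*x*1 + out*1, i.e. y ← Ax + y
    return out
end

P = sparse([1, 2, 3, 3], [1, 2, 1, 3], [0.5, -1.0, 2.0, 1.0], 3, 3)
X = AffineStep(P, [1.0, 1.0, 1.0])
x = [1.0, 2.0, 3.0]
out = affine_step!(similar(x), x, X)       # same values as x + P*x + q
```

In a loop, `out` would be allocated once outside and reused on every call.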

The number of multiplications is reduced but, you are right, the indexing is still the same. Hence the matrix-vector product factorizes. I do not think you understood the predicate I use and the structure of the matrix: for each row of the matrix, every non-zero value is the same. This should have the same number of multiplications as a regular sparse matrix multiplication, since you're taking the indices per row from the sparse matrix anyway. This is accomplished by counting the number of singular values greater than a given tolerance.
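One possible reading of "the product factorizes", when every non-zero in row i shares a single value d[i], is that (Ax)[i] = d[i] · (sum of x[j] over the non-zero positions j of row i). The sketch below illustrates that reading only; `rowconst_mul!`, `Bt` and `d` are hypothetical names, and the pattern is stored transposed because Julia's `SparseMatrixCSC` is column-oriented.

```julia
using SparseArrays

# Hypothetical helper. Bt holds the transposed sparsity pattern of A, so
# column i of Bt lists the non-zero positions of row i of A. d[i] is the
# single value shared by all non-zeros in row i of A.
function rowconst_mul!(y::AbstractVector, Bt::SparseMatrixCSC,
                       d::AbstractVector, x::AbstractVector)
    rv = rowvals(Bt)
    for i in 1:size(Bt, 2)
        s = zero(eltype(y))
        for k in nzrange(Bt, i)            # same index lookups as a plain SpMV
            s += x[rv[k]]                  # additions only
        end
        y[i] = d[i] * s                    # one multiplication per row
    end
    return y
end

# Row 1 holds only 2.0, row 2 only 5.0, row 3 only 7.0.
A = sparse([1, 1, 2, 3, 3], [1, 3, 2, 1, 2], [2.0, 2.0, 5.0, 7.0, 7.0], 3, 3)
Bt = copy(transpose(A))                    # only its pattern is used
d = [2.0, 5.0, 7.0]
x = [1.0, 2.0, 3.0]
y = rowconst_mul!(zeros(3), Bt, d, x)      # matches A * x
```

The multiplications drop from one per stored entry to one per row, while the indexed loads of `x` stay the same, which is consistent with both remarks above.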
